Computer Vision: Image Detection for Health Care

This tutorial can be found on the Wallaroo Tutorials GitHub Repository.

Image Detection for Health Care Computer Vision Tutorial Part 02: External Data Connection Stores

The first example in this tutorial series showed selecting from 10 random images of cells and detecting mitochondria from within them.

This tutorial expands on that by using a Wallaroo connection to retrieve a high resolution 1536x2048 image and break it down into 256x256 “patches” that can be quickly analyzed.

Wallaroo connections are definitions set by MLOps engineers that give other Wallaroo users the connection information for a data source. For this example, the data source is a pair of HTTP URLs, but it could be a Google BigQuery database, a Kafka topic, or any other user-defined source.

Tutorial Goals

This tutorial will perform the following:

- Upload and deploy the mitochondria_epochs_15.onnx model to a Wallaroo pipeline.

- Create a Wallaroo connection pointing to a location for a high resolution image of cells.

- Break down the image into 256x256 images based on how the model was trained to detect mitochondria.

- Convert the images into a numpy array inserted into a pandas DataFrame.

- Submit the DataFrame to the Wallaroo pipeline and use the results to create a mask image of where the model detects mitochondria.

- Compare the original image against a map of “ground truth” and the model’s mask image.

- Undeploy the pipeline and return the resources to the Wallaroo instance.

Prerequisites

Complete the steps from Mitochondria Detection Computer Vision Tutorial Part 00: Prerequisites and Mitochondria Detection Computer Vision Tutorial Part 01.

Mitochondria Computer Vision Detection Steps

Import Libraries

The first step is to import the necessary libraries. Included with this tutorial are the following custom modules:

tiff_utils: Organizes the tiff images to perform random image selections and other tasks.

import json

import time

import matplotlib.pyplot as plt

from IPython.display import clear_output, display

from lib.TiffImageUtils import TiffUtils

import tifffile as tiff

import pandas as pd

import wallaroo

from wallaroo.object import EntityNotFoundError, RequiredAttributeMissing

import numpy as np

import cv2

from tensorflow.keras.utils import normalize

tiff_utils = TiffUtils()

# ignoring warnings for demonstration

import warnings

warnings.filterwarnings('ignore')

Open a Connection to Wallaroo

The next step is to connect to Wallaroo through the Wallaroo client. The Python library is included in the Wallaroo install and available through the JupyterHub interface provided with your Wallaroo environment.

This is accomplished using the wallaroo.Client() command, which provides a URL to grant the SDK permission to your specific Wallaroo environment. When displayed, enter the URL into a browser and confirm permissions. Store the connection into a variable that can be referenced later.

If logging into the Wallaroo instance through the internal JupyterHub service, use wl = wallaroo.Client(). For more details on logging in through Wallaroo, see the Wallaroo SDK Essentials Guide: Client Connection.

# Login through local Wallaroo instance

wl = wallaroo.Client()

Create Workspace and Pipeline

We will create a workspace to manage our pipeline and models. The following variables will set the name of our sample workspace then set it as the current workspace.

The pipeline and model were created in a previous notebook, so we will retrieve the same workspace and pipeline.

workspace_name = f'biolabsworkspace'

pipeline_name = f'biolabspipeline'

model_name = f'biolabsmodel'

model_file_name = 'models/mitochondria_epochs_15.onnx'

workspace = wl.get_workspace(name=workspace_name, create_if_not_exist=True)

wl.set_current_workspace(workspace)

pipeline = wl.get_pipeline(pipeline_name)

pipeline

| name | biolabspipeline |

|---|---|

| created | 2024-04-16 17:45:11.920420+00:00 |

| last_updated | 2024-04-16 17:49:35.206242+00:00 |

| deployed | False |

| arch | x86 |

| accel | none |

| tags | |

| versions | 05c8bd8a-9e9e-4a7d-8b5e-60b90db17f37, c6cbdc9f-89f9-45ec-8c57-b8f53b97afc5, 96936bac-8412-4858-ab31-0dcf9de140cf, a28ee7d0-8067-4762-b030-12c0b566d745, 3d06942d-aaf9-4737-b955-944e7d5ef4ec, a14aa324-f8e7-4245-aba2-e494ce66db5f, 93427c46-e084-4e0e-98a3-01d8f7a12e51, ce375d49-cda7-4a6e-805a-735946d59f52, 9fac21be-a163-478e-a59f-eda0b00dd0c8 |

| steps | biolabsmodel |

| published | False |

Deploy the Pipeline

From here, we can go right into deploying the pipeline and prepare it for inferencing.

deployment_config = wallaroo.DeploymentConfigBuilder() \
    .replica_count(1) \
    .cpus(1) \
    .memory("2Gi") \
    .build()

pipeline.deploy(deployment_config = deployment_config)

| name | biolabspipeline |

|---|---|

| created | 2024-04-16 17:45:11.920420+00:00 |

| last_updated | 2024-04-16 17:51:14.275187+00:00 |

| deployed | True |

| arch | x86 |

| accel | none |

| tags | |

| versions | 894ef6c1-99e8-43e6-829b-095c74d00802, 05c8bd8a-9e9e-4a7d-8b5e-60b90db17f37, c6cbdc9f-89f9-45ec-8c57-b8f53b97afc5, 96936bac-8412-4858-ab31-0dcf9de140cf, a28ee7d0-8067-4762-b030-12c0b566d745, 3d06942d-aaf9-4737-b955-944e7d5ef4ec, a14aa324-f8e7-4245-aba2-e494ce66db5f, 93427c46-e084-4e0e-98a3-01d8f7a12e51, ce375d49-cda7-4a6e-805a-735946d59f52, 9fac21be-a163-478e-a59f-eda0b00dd0c8 |

| steps | biolabsmodel |

| published | False |

Create the Wallaroo Connection

The Wallaroo connection will point to the location of our high resolution images:

- One for the cell photos to be retrieved.

- The other for the “ground truth” masks that are compared against the model’s predictions.

The images will be retrieved, then parsed into a series of 256x256 images.

image_connection_name = f'mitochondria_image_source'

image_connection_type = "HTTP"

image_connection_argument = {

'cell_images':'https://storage.googleapis.com/wallaroo-public-data/csa_demo/computer-vision/examples/medical/bio-labs/atl-lab/images/ms-01-atl-3-22-23_9-50.tiff',

'ground_truth_masks': 'https://storage.googleapis.com/wallaroo-public-data/csa_demo/computer-vision/examples/medical/bio-labs/atl-lab/masks/ms-01-atl-3-22-23_9-50-masks.tiff'

}

connection = wl.create_connection(image_connection_name, image_connection_type, image_connection_argument)

Retrieve Images

We’ll use our new connection to retrieve the images and store them locally for processing. The connection information is returned by the connection’s details() method. It is hidden by default when the connection is displayed, but it can be retrieved when necessary.

inference_source_connection = wl.get_connection(name=image_connection_name)

display(inference_source_connection)

| Field | Value |

|---|---|

| Name | mitochondria_image_source |

| Connection Type | HTTP |

| Details | ***** |

| Created At | 2024-04-16T17:51:37.014995+00:00 |

| Linked Workspaces | [] |

patches_dict = tiff_utils.build_patches("downloaded_patches",

(256,256),

256,

inference_source_connection.details()['cell_images'],

inference_source_connection.details()['ground_truth_masks'] )

created dir downloaded_patches/ms-01-atl-3-22-23_9-50

saving file downloaded_patches/ms-01-atl-3-22-23_9-50/ms-01-atl-3-22-23_9-50.tiff
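The patch step above can be illustrated with NumPy alone. This is a minimal sketch, not the implementation inside TiffUtils: the helper name split_into_patches is hypothetical, and an all-zero array stands in for the downloaded image. It assumes the same patch size (256, 256) and step 256 passed to build_patches, so the patches do not overlap.

```python
import numpy as np

def split_into_patches(image, patch_size=(256, 256), step=256):
    """Cut a 2-D image into patches of patch_size, moving `step` pixels at a time.

    Hypothetical helper for illustration only; not part of TiffUtils.
    """
    patch_h, patch_w = patch_size
    rows, cols = image.shape[:2]
    patches = []
    for top in range(0, rows - patch_h + 1, step):
        for left in range(0, cols - patch_w + 1, step):
            patches.append(image[top:top + patch_h, left:left + patch_w])
    return np.stack(patches)

# Stand-in for the downloaded image: zeros with the tutorial's dimensions.
full_image = np.zeros((1536, 2048), dtype=np.uint8)

example_patches = split_into_patches(full_image)
print(example_patches.shape)  # (48, 256, 256): 6 x 8 non-overlapping patches
```

With a step equal to the patch size, a 1536x2048 image yields 6 x 8 = 48 patches. A smaller step would produce overlapping patches.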

Retrieve Image and Convert to Data

The next step is to convert the image into a numpy array in the format the model was trained on.

We start by retrieving all the patch images from a time series tiff recorded on one of our microscopes.

sample_mitochondria_patches_path = "./downloaded_patches/ms-01-atl-3-22-23_9-50"

patches = tiff_utils.get_all_patches(sample_mitochondria_patches_path)

We will retrieve a random 256x256 patch image and use it for our semantic segmentation prediction.

We’ll then convert it into a numpy array and insert it into a DataFrame for a single inference.

The following helper function loadImageAndConvertTiff converts the image into a numpy array, then inserts that array into a DataFrame. This allows a later command to repeat the same process on other randomly selected images.

def loadImageAndConvertTiff(imagePath, width, height):
    # read the patch as a single-channel grayscale image
    img = cv2.imread(imagePath, 0)

    # normalize along axis 1, then add the channel and batch axes: (1, rows, cols, 1)
    imgNorm = np.expand_dims(normalize(np.array(img), axis=1), 2)
    imgNorm = imgNorm[:, :, 0][:, :, None]
    imgNorm = np.expand_dims(imgNorm, 0)

    # no resizing is performed; None is returned in its place
    resizedImage = None

    # creates a dictionary with the wallaroo "tensor" key and the numpy ndim array representing image as the value.
    dictData = {"tensor": [imgNorm]}
    dataframedata = pd.DataFrame(dictData)
    # display(dataframedata)
    return dataframedata, resizedImage
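The shape handling in loadImageAndConvertTiff can be followed with NumPy alone. This is a sketch under stated assumptions: l2_normalize_rows is a hypothetical stand-in for the Keras normalize call with axis=1, which scales each row to unit L2 norm, and a random array stands in for the grayscale patch returned by cv2.imread.

```python
import numpy as np

def l2_normalize_rows(img):
    """Scale each row to unit L2 norm; all-zero rows are left as zeros.

    Hypothetical stand-in for tensorflow.keras.utils.normalize(img, axis=1).
    """
    img = img.astype(np.float64)
    norms = np.linalg.norm(img, axis=1, keepdims=True)
    norms[norms == 0] = 1.0  # avoid dividing by zero
    return img / norms

# Stand-in for a 256x256 grayscale patch (values 1..255).
rng = np.random.default_rng(0)
patch = rng.integers(1, 256, size=(256, 256)).astype(np.uint8)

normalized = l2_normalize_rows(patch)              # shape (256, 256)
tensor = normalized[np.newaxis, :, :, np.newaxis]  # shape (1, 256, 256, 1)
print(tensor.shape)
```

The result has a batch axis in front and a single channel axis at the end, which is the layout the helper wraps in the "tensor" column of the DataFrame.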

def run_semantic_segmentation_inference(pipeline, input_tiff_image, width, height, threshold):
    tensor, resizedImage = loadImageAndConvertTiff(input_tiff_image, width, height)
    # print(tensor)

    # run inference on the 256x256 patch image to get the predicted mitochondria mask
    output = pipeline.infer(tensor)
    # print(output)

    # obtain the flattened predicted mitochondria mask result
    list1d = output.loc[0]["out.conv2d_37"]
    np1d = np.array(list1d)

    # unflatten it
    predicted_mask = np1d.reshape(1, width, height, 1)

    # perform the element-wise comparison operation using the threshold provided
    predicted_mask = (predicted_mask[0, :, :, 0] > threshold).astype(np.uint8)

    return predicted_mask
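The last two steps of run_semantic_segmentation_inference, unflattening the output and applying the threshold, can be tried on a tiny example without a deployed pipeline. This is an illustrative sketch: to_binary_mask is a hypothetical helper, and the six scores below are made-up values standing in for the flattened out.conv2d_37 output.

```python
import numpy as np

def to_binary_mask(flat_output, height, width, threshold):
    """Reshape a flat list of scores to (height, width) and threshold it.

    Hypothetical helper for illustration; pixels scoring above the
    threshold become 1, all others 0.
    """
    scores = np.asarray(flat_output, dtype=np.float64).reshape(height, width)
    return (scores > threshold).astype(np.uint8)

# Made-up scores for a 2x3 "image".
flat_scores = [0.05, 0.25, 0.90, 0.10, 0.15, 0.21]

mask = to_binary_mask(flat_scores, height=2, width=3, threshold=0.2)
print(mask)
# [[0 1 1]
#  [0 0 1]]
```

A lower threshold marks more pixels as mitochondria and a higher one marks fewer. The tutorial uses 0.2.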

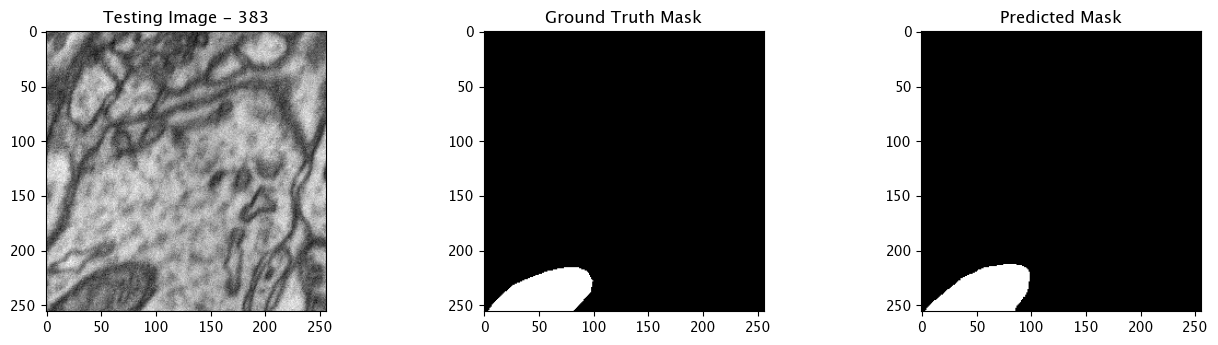

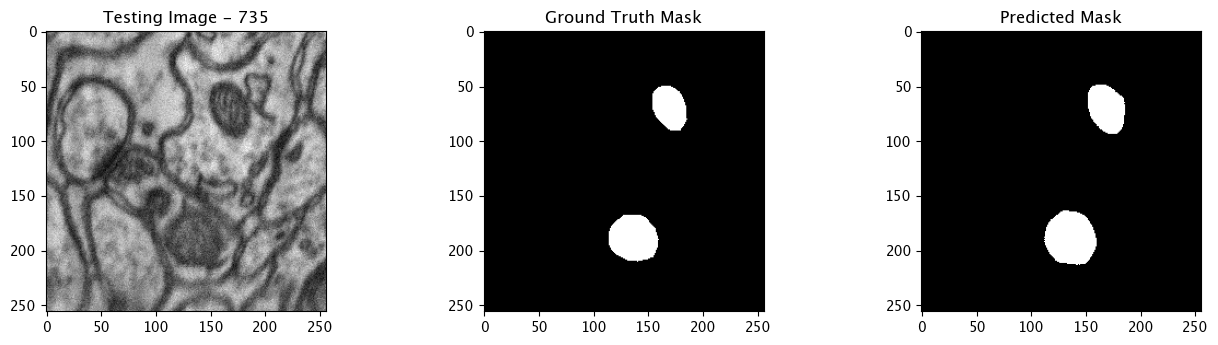

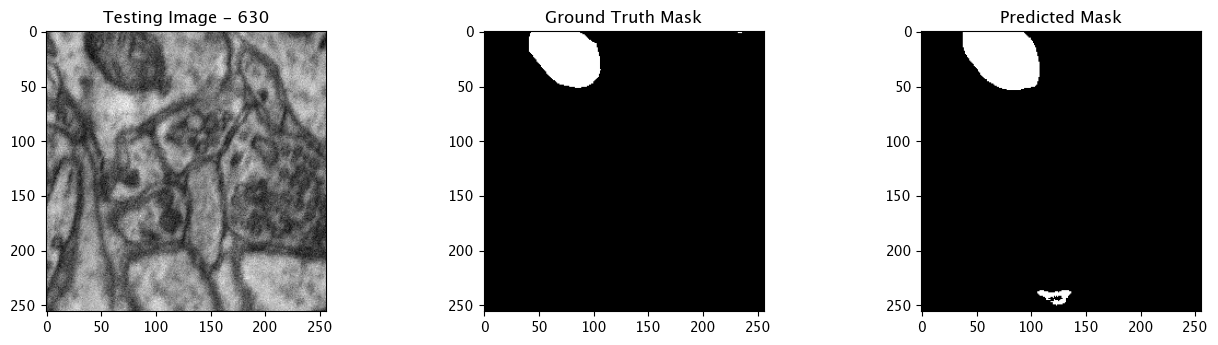

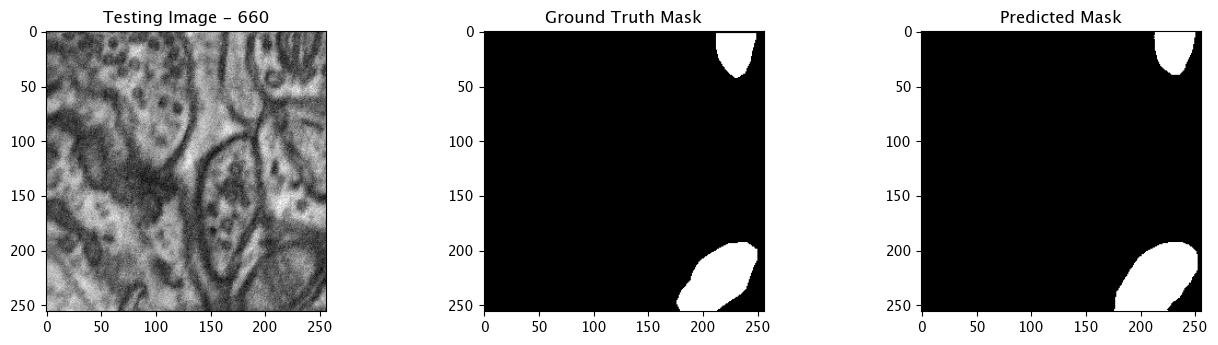

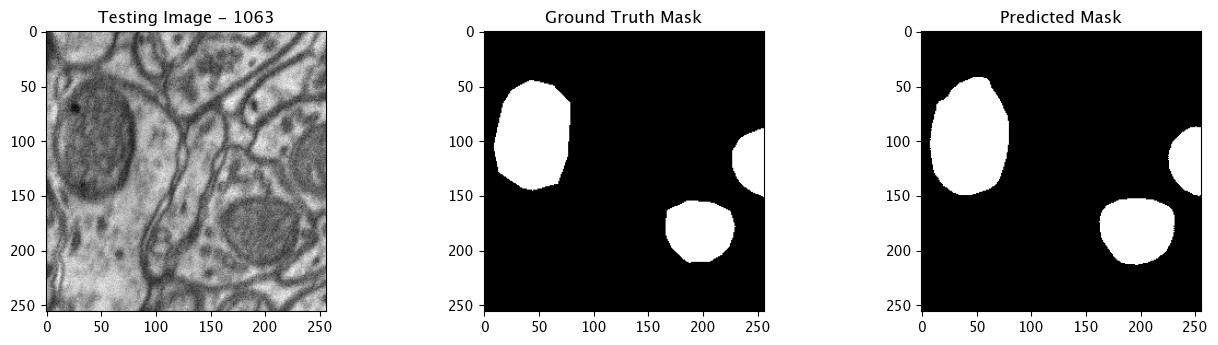

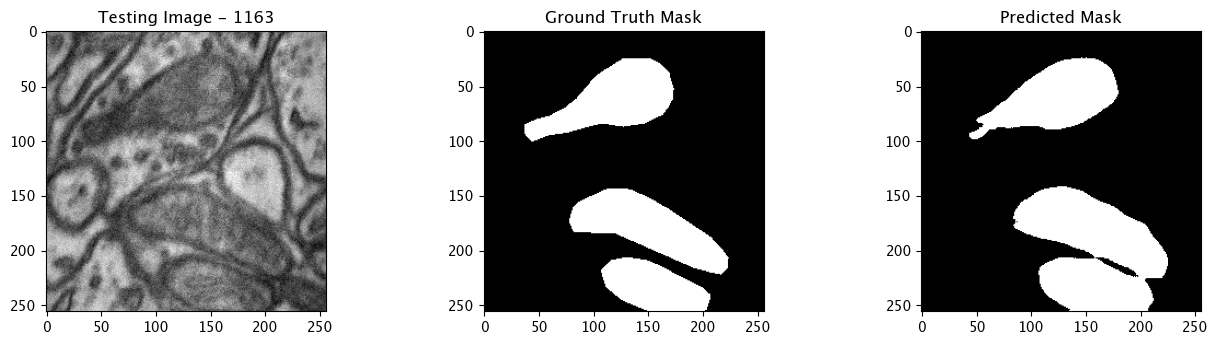

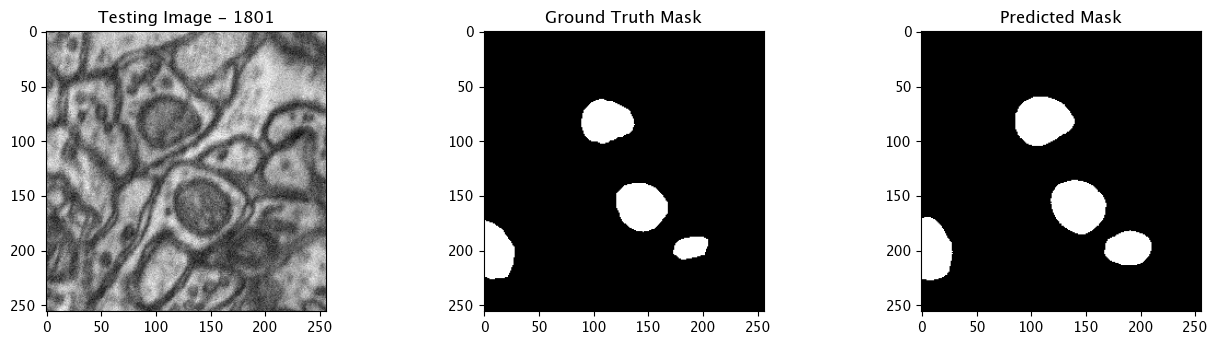

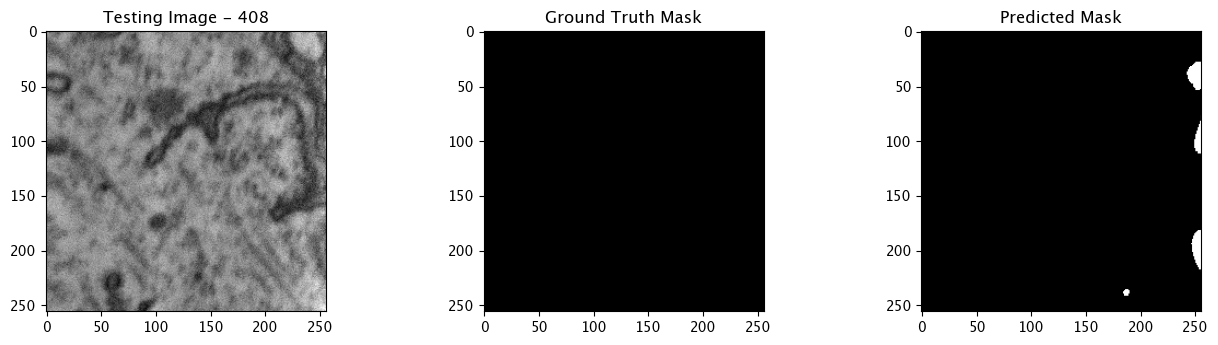

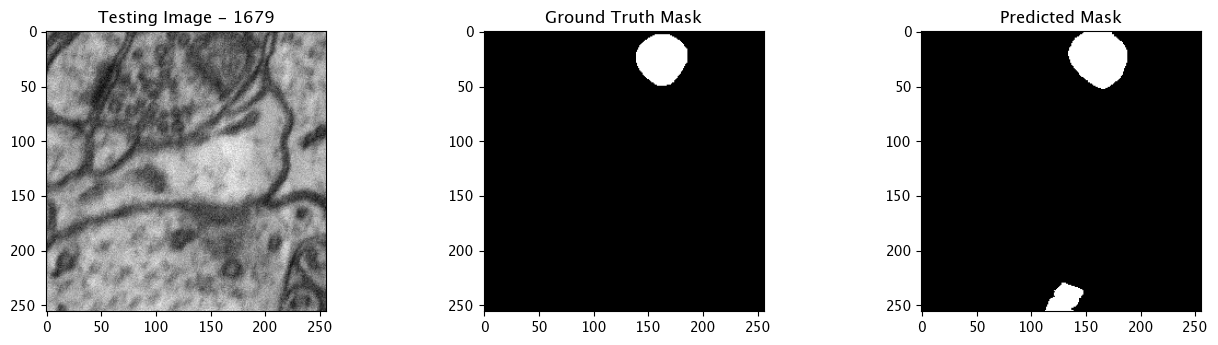

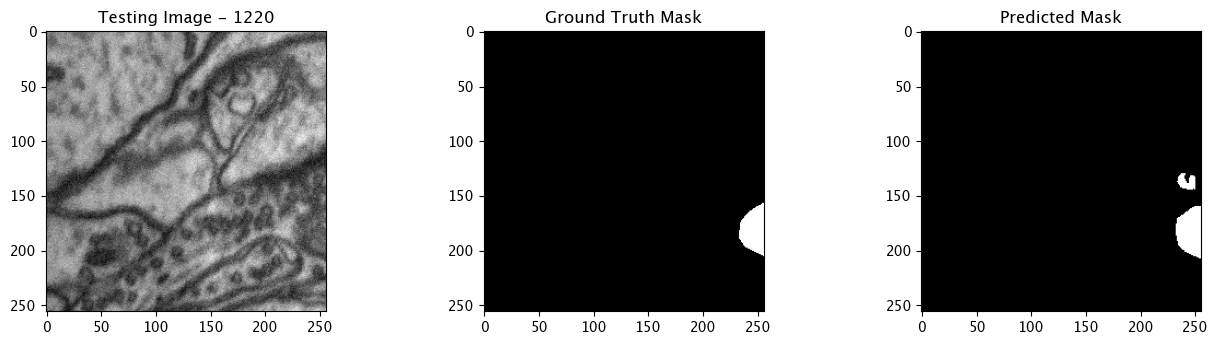

Infer and Display Results

We will now perform our inferences and display the results. Each inference produces a predicted mask showing where the mitochondria are located.

- The first image is the input image.

- The second image is the ground truth. The mask was created by a human who identified the mitochondria in the input image.

- The third image is the predicted mask after running inference on the Wallaroo pipeline.

We’ll perform this 10 times to show how quickly the inferences can be submitted.

for x in range(10):
    # get a sample 256x256 mitochondria image
    random_patch = tiff_utils.get_random_patch_sample(patches)

    # build the path to the image
    patch_image_path = sample_mitochondria_patches_path + "/images/" + random_patch['patch_image_file']

    # run inference in order to get the predicted 256x256 mask
    predicted_mask = run_semantic_segmentation_inference(pipeline, patch_image_path, 256, 256, 0.2)

    # plot the results
    test_image = random_patch['patch_image'][:,:,0]
    test_image_title = f"Testing Image - {random_patch['index']}"

    ground_truth_image = random_patch['patch_mask'][:,:,0]
    ground_truth_image_title = "Ground Truth Mask"

    predicted_mask_title = 'Predicted Mask'

    tiff_utils.plot_test_results(test_image, test_image_title,
                                 ground_truth_image, ground_truth_image_title,
                                 predicted_mask, predicted_mask_title)
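The tutorial compares the masks visually. As an optional complement that is not part of the original notebook, the overlap between a ground truth mask and a predicted mask could also be summarized as a single number. The sketch below uses intersection over union, with a hypothetical helper name and two tiny made-up masks. It treats any non-zero pixel as foreground, so it would also accept masks stored as 0/255.

```python
import numpy as np

def intersection_over_union(mask_a, mask_b):
    """Return |A and B| / |A or B| for two masks of the same shape.

    Hypothetical helper for illustration; non-zero pixels count as foreground.
    Two empty masks are treated as a perfect match.
    """
    a = np.asarray(mask_a).astype(bool)
    b = np.asarray(mask_b).astype(bool)
    union = np.logical_or(a, b).sum()
    if union == 0:
        return 1.0
    return float(np.logical_and(a, b).sum() / union)

# Made-up 2x3 masks: one shared foreground pixel, three foreground pixels overall.
truth = np.array([[1, 1, 0],
                  [0, 0, 0]])
pred = np.array([[1, 0, 0],
                 [0, 1, 0]])

iou = intersection_over_union(truth, pred)
print(round(iou, 3))  # 0.333
```

Inside the loop above, the same function could be called with ground_truth_image and predicted_mask, assuming both are 256x256 arrays.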

Complete Tutorial

With the demonstration complete, the pipeline is undeployed and its resources are returned to the Wallaroo instance.

pipeline.undeploy()

| name | biolabspipeline |

|---|---|

| created | 2024-04-16 17:45:11.920420+00:00 |

| last_updated | 2024-04-16 17:51:14.275187+00:00 |

| deployed | False |

| arch | x86 |

| accel | none |

| tags | |

| versions | 894ef6c1-99e8-43e6-829b-095c74d00802, 05c8bd8a-9e9e-4a7d-8b5e-60b90db17f37, c6cbdc9f-89f9-45ec-8c57-b8f53b97afc5, 96936bac-8412-4858-ab31-0dcf9de140cf, a28ee7d0-8067-4762-b030-12c0b566d745, 3d06942d-aaf9-4737-b955-944e7d5ef4ec, a14aa324-f8e7-4245-aba2-e494ce66db5f, 93427c46-e084-4e0e-98a3-01d8f7a12e51, ce375d49-cda7-4a6e-805a-735946d59f52, 9fac21be-a163-478e-a59f-eda0b00dd0c8 |

| steps | biolabsmodel |

| published | False |